Executive Summary

Because of increased member turnover, more than half of the admissions committee members have served for less than six months. Members of the admissions committee screen the 4000 applications that the department receives every academic year. Experienced members have developed mental rubrics that let them screen applications effectively and efficiently. Newer members have not yet developed this skill, have no tools available to help them, and are requesting a rubric. The Association of American Medical Colleges has also been encouraging all medical schools to adopt a more standardized, reproducible, legally defensible screening procedure. A workshop was planned to draw on the experience of the senior committee members to develop a screening rubric that all members of the committee could use. A needs analysis identified many inconsistencies in the screening process. Contributing factors may include: lack of experience among committee members; limited training time, since all members are volunteers; subjective interpretation of the school’s mission statement; and the concern that diversity of the student body remains of paramount importance even while consistency in screening is sought. To complicate matters, new admissions software will be introduced next cycle, and all users will need to become comfortable screening with it.

A workshop setting was ideal for the training because a routine introductory workshop is already held at the beginning of each academic cycle to update the committee and to vote on new policies. Small groups were assembled to review applications and the mission statement, with the goal of developing a rubric that identifies the applicant attributes most closely aligned with each statement in the mission statement. After the consensus rubric was developed, the small groups scored a candidate application using the rubric. These candidates had already been prescreened by a series of experienced admissions committee members to provide a control or standard. A previous sample of new committee members had also screened applicants that were previously screened by experienced members, which provided a baseline of consistency. Next, an inexperienced committee member scored an applicant under the supervision and guidance of an experienced member. Finally, all admissions committee members scored an applicant using the Webadmit software.

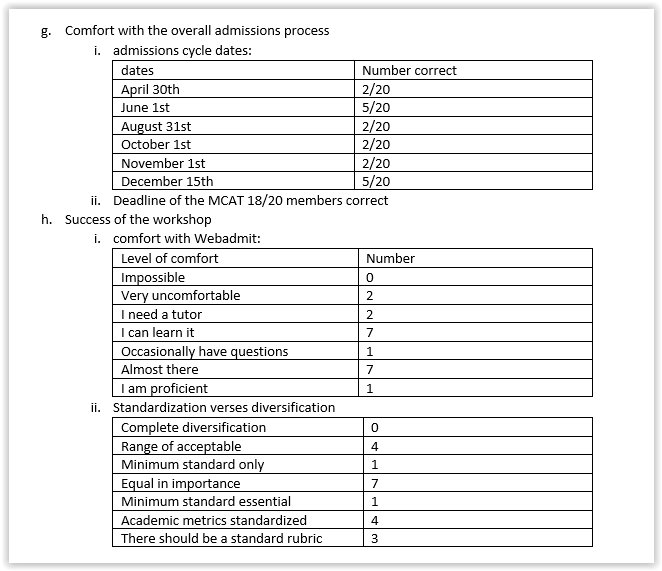

During the workshop pilot session, it took much longer than anticipated to agree on a consensus rubric. The end result was a rubric that provided several options for attributes that could meet each category identified from the mission statement. Due to insufficient time, much of the Webadmit training will need to be revisited in subsequent sessions. Committee members were able to meet the goal of scoring an applicant, but needed significant support from IT and staff to do so. To measure consistency, two experienced committee members scored 10 applications, which were subsequently scored by new members of the admissions committee. The mean score from the experienced members was 4.3 with a standard deviation of 2.1; the mean score from the new members was 4.0 with a standard deviation of 3.5. Experienced members were therefore slightly more lenient and had a tighter range of scores than new members. A follow-up survey sent to attendees showed that committee members needed further training on Webadmit, admissions cycle dates, and some aspects of the admissions process, but clearly understood how to use the application to find needed attributes and data and how to use a rubric.
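The comparison of the two scorer groups can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, the report does not state whether a sample or population standard deviation was used (the sketch assumes the sample form), and the only figures taken from the report are the two reported means and standard deviations.

```python
from statistics import mean, stdev


def summarize(scores):
    """Return (mean, sample standard deviation) for a list of screening scores.

    Assumption: the sample standard deviation is used; the report does not
    say which form was calculated.
    """
    return round(mean(scores), 2), round(stdev(scores), 2)


def compare(experienced_summary, new_summary):
    """Compare two (mean, sd) summaries.

    mean_gap > 0 means the experienced group scored higher on average.
    sd_ratio > 1 means the new group's scores were more spread out.
    """
    exp_mean, exp_sd = experienced_summary
    new_mean, new_sd = new_summary
    return {
        "mean_gap": round(exp_mean - new_mean, 2),
        "sd_ratio": round(new_sd / exp_sd, 2),
    }


# Summary statistics as reported for the 10 applications.
reported = compare((4.3, 2.1), (4.0, 3.5))
print(reported)
```

With the reported figures, the mean gap is small (0.3 points) while the spread among new members is roughly 1.7 times that of experienced members, which is the inconsistency the rubric is meant to reduce. The `summarize` helper shows how the same summaries would be produced from raw scores in a future cycle.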

The admissions committee meets every two weeks. The findings of this report should be shared with the committee so that members can discuss what level of scoring consistency is their benchmark and whether it was achieved in this workshop. Committee members were not comfortable with the Webadmit software, so a series of pamphlets should be made to guide them through each step in the screening process. Contact information for the IT and admissions staff should be provided so that all committee members have ready access to assistance with the Webadmit software. Each aspect of the rubric should be revisited in small chunks to further clarify goals and make it easier to use. Training in using the rubric should be incorporated into the new member orientation sessions.

Evaluation Purpose

The purpose of this evaluation was to determine the most efficient and effective way to make screening medical school applicants more consistent. All medical schools in the United States have developed a screening process based on their school’s mission statement. This screening process must be consistent, fair, and legally defensible while acknowledging that all screeners have biases and beliefs that cloud their interpretation of the mission statement. Each applicant to medical school comes from a different educational, social, and cultural background. In addition, a paper application can only give so much information. The purpose of the screen is to determine who should be selected for the 400-450 interview slots that are available each year.

The purpose of this evaluation and subsequent workshop was to make the process of screening as transparent as possible when developing a screening rubric based on the school’s mission statement. The workshop setting allowed all admissions committee members to express their beliefs and screening preferences. The goal was to provide enough structure so that goals could be met, but enough flexibility so each member felt that their opinions had been heard and incorporated in the final product.

Evaluation Issues

- Can admissions committee members log into Webadmit, find their screening assignments, read the application online and then provide a screening score using the Webadmit software?

- Can the key attributes in a candidate’s application be identified and then aligned with the school mission statement?

- Are all committee members familiar with the prerequisites for admission to the school?

- Are all committee members familiar with the minimum requirements for admission to the school?

- Are admissions committee members comfortable with what attributes are required to rate or score a candidate as a yes, high maybe, maybe, or no?

- Are all admissions committee members familiar with where information can be found in the medical school application?

- Are committee members comfortable with the overall process of admitting a student to medical school?

Methodology

The target population for this workshop was the newer members of the admissions committee, but all admissions committee members were required to be present and contribute. Members of the admissions committee are physicians, scientists, community members, and admissions staff who all have a vested interest in the admissions process. Each member of the committee has been either elected or appointed by the dean of the medical school. Leading up to the workshop, background material was presented at the biweekly admissions committee meetings, and there is a plan to continue revisiting the rubric, applicant scoring, and the use of the Webadmit software throughout the next academic cycle.

The workshop was scheduled by the admissions coordinator after a survey was sent to all committee members requesting meeting availability preferences. An all-day workshop was scheduled with two sessions planned before lunch and two after lunch. Since small group problem solving was key to success, the admissions coordinator requested RSVPs from all participants and then worked with the director of admissions to select which members would be placed in each group. An overview of the workshop was sent which included the following goals: learn to use Webadmit software for screening applicants; develop a rubric to match applicant attributes with key statements in the mission statement; increase familiarity with the content found in each category of the medical school application; review the prerequisites and minimum requirements for admission to medical school; and discuss and then use a rubric to practice scoring applicants within the small group, with a partner, and then using the Webadmit software.

The effectiveness of the workshop was assessed with a survey that tested the knowledge gained from the workshop and gathered participants’ perceptions of how well the workshop met its goals. At the conclusion of the workshop, an email was sent to all participants containing the link to the survey, directions on how to use Webadmit, and the consensus rubric.

Link to the survey:

Workshop Survey

Can admissions committee members log into Webadmit, find their screening assignments, read the application online and then provide a screening score using the Webadmit software?

- Questions 6 and 8 assess an admissions committee member’s understanding of a key feature in the Webadmit software.

- Question 16 tests knowledge of the information needed to log on to Webadmit.

- Question 19 tests an important convenience feature in Webadmit.

- Question 20 tests the ability to find screening assignments.

- Questions 3, 5, and 13 assess an understanding of the value of components of the medical school application.

- Question 15 tests a learner’s understanding of the experience section of the application which is necessary to match attributes to mission

- Question 21 tests the ability of a committee member to know the attributes used to assess alignment to the mission statement as well as articulate an understanding of why they are important.

- Questions 1 and 7 assess requirements for admission.

- Question 18 tests an understanding that biochemistry is not required yet, but will be.

- Question 2 assesses minimum requirements

- Question 4 assesses an understanding of a need to meet minimum requirements before receiving a secondary application.

- Question 14 provides a comprehensive list of key attributes and tests a learner’s understanding of what these attributes mean

- Question 15 tests an understanding of experiences that applicants submit demonstrating that they meet required attributes.

- Questions 22, 23, and 24 test an understanding of a differentiating factor when scoring an applicant.

- Question 25 assesses an understanding of the overall approach to scoring that is required by the school, dean, and committee.

- Question 29 demonstrates an ability to use the rubric to score an applicant.

- Questions 9 and 10 assess an understanding of the main categories of the application.

- Question 11 assesses an understanding of the acronyms frequently used in the application process

- Question 12 assesses an understanding of the admissions process.

- Question 17 tests an important deadline in the application cycle

- Question 26 assesses participants’ comfort with Webadmit.

- Question 27 will be used to correlate the feelings of the workshop participants to the success in developing a standardized rubric.

- Question 28 evaluates the main goal of the workshop

- Question 30 assesses participants’ comfort with the rubric and gauges whether committee members are likely to use the rubric in scoring after the workshop.

Results of the Workshop

The results of the Qualtrics survey were tabulated and all essay questions were read. Committee members who did not complete the survey in 72 hours were sent one reminder email.

- Development of a consensus rubric. Participants in the workshop identified six main categories that they would use when scoring an applicant: dedication to humanity, interpersonal skills, maturity, motivation to pursue a career in medicine, academic factors, and letters of recommendation. They were able to identify attributes found in the application that would fit under each of these categories. However, they were not consistent, and most felt that applicants could not be fully evaluated without the interview step. Most committee members agreed on a minimum of five attributes for each section that would fully or partially demonstrate that the applicant displayed the qualities sought by the committee.

- 27/30 members of the admissions committee participated in the workshop and all 27 stayed until the end.

- 20/30 members of the admissions committee completed the survey with the following results

1. Questions on Webadmit

ii. Understanding of clipboard and admissions actions: 2/20 correct

iii. Ability to use the grade point feature to pull out parts of GPA: 0/20 correct

iv. Ability to find applicants on Webadmit for screening: 19/20

2. Questions on key attributes and alignment with mission

i. Understanding of BCPM GPA: 20/20 correct

ii. Understanding of location of academic metrics: 20/20 correct

iii. Understanding of subsections of MCAT score: 18/20 correct

iv. Understanding of experiences: 19/20 all correct; 1 participant confused 2 experiences

v. Question 21 will be used to assess the correlation between the attributes listed by survey participants and the ones listed in the rubric. These results will be discussed at an upcoming committee meeting. After the committee meeting, any conclusions drawn will be shared. 17/20 completed the question.

3. Questions on prerequisites for admission to the school

i. All 3 questions (1, 7, 18) were 20/20 for committee members

4. Questions on minimum requirements for admission to the school

i. Both questions: 20/20

5. Admissions committee members’ comfort with the attributes required to rate or score a candidate as a yes, high maybe, maybe, or no

i. Question 14 (comprehensive list of attributes): 20/20 for members

ii. Question 15 (members could match experiences with definitions): 20/20

iii. Question 22: there was no consensus among survey takers on the activity that best demonstrates meeting a required attribute. The three highest scoring were working in a free clinic (5/20), scribe (3/20), and EMT (2/20).

iv. Questions 23, 24, and 25: opinion questions; results will be compiled by the admissions director and disseminated to committee members. These questions were not meant to test knowledge, but to provide an overview of the thoughts and feelings of the committee members.

v. Question 29: 18/20 members granted an interview and 2/20 felt that the candidate would likely be accepted.

6. Admissions committee members’ familiarity with where information can be found in the medical school application

i. Question 9 (main categories): 20/20

ii. Question 10 (main categories): 20/20

iii. Question 11 (acronyms): 15/20 got 2 or more correct, 12/20 got 3 or more correct, and 3/20 got all 4 correct
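The tallies above can be converted to percentages to see at a glance which topics need follow-up training. The sketch below is illustrative only: the function names are hypothetical, and the 80% cutoff is an assumption made for the example, not a benchmark set by the committee. The counts are copied from the survey results and participation figures reported in this section.

```python
def percent_correct(correct, total):
    """Convert a tally such as 19/20 into a percentage."""
    return 100 * correct / total


def needs_retraining(tallies, threshold=80.0):
    """List the topics whose percent correct falls below the threshold.

    Assumption: 80% is an illustrative cutoff, not a committee benchmark.
    """
    return [
        topic
        for topic, (correct, total) in tallies.items()
        if percent_correct(correct, total) < threshold
    ]


# Counts taken from the survey results (correct answers, respondents).
tallies = {
    "Clipboard and admissions actions": (2, 20),
    "Grade point feature": (0, 20),
    "Finding applicants to screen": (19, 20),
    "BCPM GPA": (20, 20),
    "MCAT subsections": (18, 20),
}

for topic, (correct, total) in tallies.items():
    print(f"{topic}: {percent_correct(correct, total):.0f}%")

print("Follow-up needed:", needs_retraining(tallies))
print(f"Workshop attendance: {percent_correct(27, 30):.0f}%")
print(f"Survey response rate: {percent_correct(20, 30):.0f}%")
```

Under this assumed cutoff, only the two Webadmit feature questions are flagged, which matches the report's finding that software training, not knowledge of the application, is the main gap.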

Conclusion and Recommendations

Overall, the workshop seemed to be at least partially successful for all committee members. Most committee members who attended felt that the workshop was a good use of time and that a discussion on consistency in screening applicants was necessary. Most of the committee members could at least log on to Webadmit and find the applicants they need to screen. No one was completely proficient after the workshop. Webadmit training will need to be repeated in small segments throughout the admissions cycle. A pamphlet demonstrating the key steps and providing contact information for staff and IT members will be provided.

Overall, all committee members seemed to know the attributes, prerequisites, and minimum requirements for the school. The prerequisites and minimum requirements have been covered in admission committee meetings prior to the workshop. This finding demonstrates that presenting small amounts of content beforehand is effective and will continue to be done. The director of admissions plans to use the first 15-20 minutes of each committee meeting to revisit some of the content discussed at the workshop.

Committee members seem to have a good understanding of the attributes they are looking for in an applicant to medical school. Notes from the workshop as well as answers to some of the essay questions will be processed by the director of admissions and used for topics in future admission committee meetings.

All committee members seem to be comfortable with the categories and the placement of these categories in the medical school application. However, most were unfamiliar with the common acronyms used. An awareness of this is important when presenting information in the future. Committee members were also unfamiliar with important dates in the admissions process, so signage will be made and displayed in the admissions committee room to help with this.

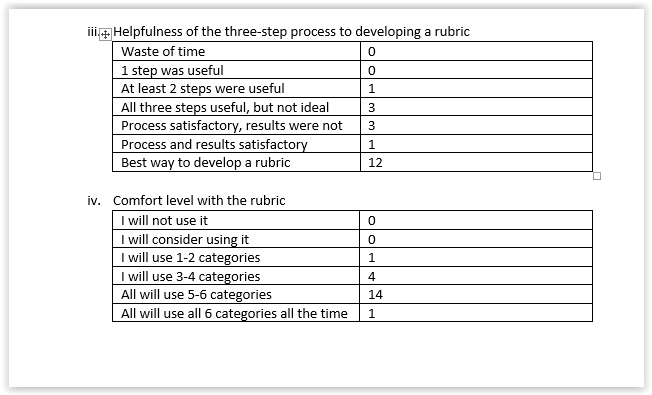

Overall evaluation of the workshop’s success at meeting its goals showed that participants were not comfortable with Webadmit and that further training will be required. Standardization versus diversification is a topic that needs further research and discussion. The three-step method of developing a rubric was generally felt to be successful and worth the time it took. The more experienced members of the committee appreciated the opportunity to mentor the newer members. Most admissions committee members plan to use the rubric consistently when screening applicants until it becomes second nature. Now that a rubric has been developed and some of the more subjective aspects of the application have been debated and discussed, I recommend that the director of admissions continue with her plan to revisit the content at the beginning of each admissions committee meeting. She will review the admissions timeline, acronyms, and use of the Webadmit software. The rubric should become part of the orientation process for new members, and it should be reviewed periodically to determine whether any updates are needed.

Future workshops should include a series of questions for the small groups to answer that guide them towards the goal of the workshop. Even with IT and admissions staff moving through the room, it was difficult to keep all small groups on track. Some small groups clearly had members who vociferously defended their points of view, which caused newer members to withdraw. Some of the more experienced committee members were not matched with a newer member, and this led to downtime for them. More careful attention needs to be paid to group assignments next time. Software training at the end of the day was also not ideal. This section of the workshop should be moved to short segments in the admissions committee meetings instead.

Source for Process:

Brown, A. H., & Green, T. D. (2016). The essentials of instructional design (3rd ed.). New York, NY: Routledge.
